Spice v1.1.0 (Mar 31, 2025)

Announcing the release of Spice v1.1.0! 🤖

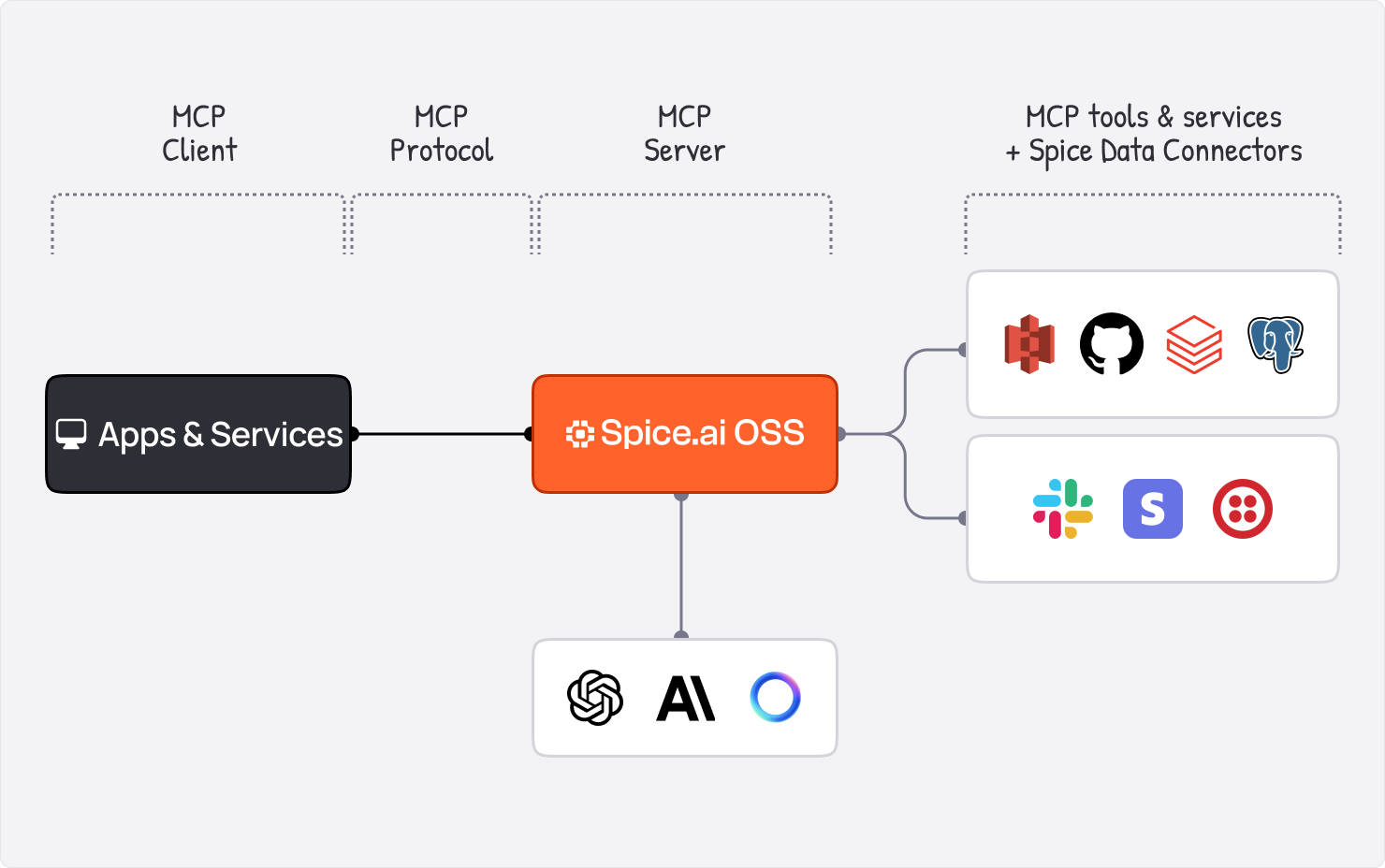

Spice v1.1.0 introduces full support for the Model Context Protocol (MCP), expanding how models and tools connect. Spice can now act as both an MCP Server, through the new `/v1/mcp/sse` API, and an MCP Client, supporting stdio and SSE-based servers. This release also introduces a new Web Search tool with Perplexity model support, advanced evaluation workflows with custom eval scorers (including LLM-as-a-judge), and an IMAP Data Connector for federated SQL queries across email servers. Alongside these features, v1.1.0 includes automatic NSQL query retries, expanded task tracing, and request draining for HTTP server shutdowns, delivering improved reliability, flexibility, and observability.

Highlights in v1.1.0

- Spice as an MCP Server and Client: Spice now supports the Model Context Protocol (MCP) for expanded tool discovery and connectivity. Spice can:

  - Run stdio-based MCP servers internally.

  - Connect to external MCP servers over the SSE protocol (Streamable HTTP is coming soon!).

  For more details, see the MCP documentation.

  Usage

      tools:
        - name: google_maps
          from: mcp:npx
          params:
            mcp_args: -y @modelcontextprotocol/server-google-maps

  Spice as an MCP Server

  Tools in Spice can be accessed via MCP, for example when connecting to Spice from an IDE like Cursor or Windsurf. Set the MCP Server URL to `http://localhost:8090/v1/mcp/sse`.

- Perplexity Model Support: Spice now supports Perplexity-hosted models, enabling advanced web search and retrieval capabilities. Example configuration:

      models:
        - name: webs
          from: perplexity:sonar
          params:
            perplexity_auth_token: ${ secrets:SPICE_PERPLEXITY_AUTH_TOKEN }
            perplexity_search_domain_filter:
              - docs.spiceai.org
              - huggingface.co

  For more details, see the Perplexity documentation.
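Once a Perplexity model is configured, it can be queried like any other Spice model through the OpenAI-compatible chat completions endpoint. The following is a minimal Python sketch of such a request. It assumes the runtime is listening on `localhost:8090` (the address used for the MCP endpoint above) and that the model is named `webs` as in the example configuration; the helper names `build_chat_request` and `ask` are illustrative and not part of Spice.

```python
import json
import urllib.request

SPICE_HTTP = "http://localhost:8090"  # assumed runtime address


def build_chat_request(question: str, model: str = "webs") -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,  # name of the model defined in the Spicepod
        "messages": [{"role": "user", "content": question}],
    }
    return urllib.request.Request(
        f"{SPICE_HTTP}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(question: str) -> str:
    """Send the request to a running runtime and return the reply text."""
    with urllib.request.urlopen(build_chat_request(question)) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

With a runtime started from the Spicepod above, calling `ask("What changed in the latest Hugging Face release?")` would send the question to the `webs` model.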

- Web Search Tool: The new Web Search Tool enables Spice models to search the web for information using search engines like Perplexity. Example configuration:

      tools:
        - name: the_internet
          from: websearch
          description: 'Search the web for information.'
          params:
            engine: perplexity
            perplexity_auth_token: ${ secrets:SPICE_PERPLEXITY_AUTH_TOKEN }

  For more details, see the Web Search Tool documentation.

- Eval Scorers: Eval scorers assess model performance on evaluation cases. Spice includes built-in scorers:

  - `match`: Exact match.

  - `json_match`: JSON equivalence.

  - `includes`: Checks if actual output includes expected output.

  - `fuzzy_match`: Normalized subset matching.

  - `levenshtein`: Levenshtein distance.

  Custom scorers can use embedding models or LLMs as judges. Example:

      evals:
        - name: australia
          dataset: cricket_questions
          scorers:
            - hf_minilm
            - judge
            - match

      embeddings:
        - name: hf_minilm
          from: huggingface:huggingface.co/sentence-transformers/all-MiniLM-L6-v2

      models:
        - name: judge
          from: openai:gpt-4o
          params:
            openai_api_key: ${ secrets:OPENAI_API_KEY }
            system_prompt: |
              Compare these stories and score their similarity (0.0 to 1.0).
              Story A: {{ .actual }}
              Story B: {{ .ideal }}

  For more details, see the Eval Scorers documentation.
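To make the built-in scorer descriptions more concrete, here is a rough Python sketch of what each comparison could look like. These functions are illustrative only: the names mirror the scorer names listed above, but the normalization rules, the 0.0 to 1.0 score range, and the way Levenshtein distance is converted into a score are assumptions rather than Spice's actual implementation.

```python
import json


def match(actual: str, ideal: str) -> float:
    """Exact string match."""
    return 1.0 if actual == ideal else 0.0


def json_match(actual: str, ideal: str) -> float:
    """Equivalence of parsed JSON (key order and whitespace are ignored)."""
    try:
        return 1.0 if json.loads(actual) == json.loads(ideal) else 0.0
    except json.JSONDecodeError:
        return 0.0


def includes(actual: str, ideal: str) -> float:
    """The actual output contains the expected output verbatim."""
    return 1.0 if ideal in actual else 0.0


def _normalize(text: str) -> str:
    # Assumed normalization: lowercase and collapse runs of whitespace.
    return " ".join(text.lower().split())


def fuzzy_match(actual: str, ideal: str) -> float:
    """Assumed meaning: normalized expected text is contained in normalized actual."""
    return 1.0 if _normalize(ideal) in _normalize(actual) else 0.0


def levenshtein_distance(a: str, b: str) -> int:
    """Classic dynamic-programming edit distance, one row at a time."""
    previous = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        current = [i]
        for j, cb in enumerate(b, start=1):
            current.append(min(
                previous[j] + 1,               # deletion
                current[j - 1] + 1,            # insertion
                previous[j - 1] + (ca != cb),  # substitution
            ))
        previous = current
    return previous[-1]


def levenshtein(actual: str, ideal: str) -> float:
    """Assumed scoring: 1.0 for identical strings, lower as edits increase."""
    longest = max(len(actual), len(ideal))
    if longest == 0:
        return 1.0
    return 1.0 - levenshtein_distance(actual, ideal) / longest
```

An embedding or LLM-judge scorer, as in the configuration above, replaces these string comparisons with a model-produced similarity score.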

- IMAP Data Connector: Query emails stored in IMAP servers using federated SQL. Example:

      datasets:
        - from: imap:[email protected]
          name: emails
          params:
            imap_access_token: ${secrets:IMAP_ACCESS_TOKEN}

  For more details, see the IMAP Data Connector documentation.

- Automatic NSQL Query Retries: Failed NSQL queries are now automatically retried, improving reliability for federated queries. For more details, see the NSQL documentation.

- Enhanced Task Tracing: Task history now includes chat completion IDs, and runtime readiness is traced for better observability. Use the `runtime.task_history` table to query task details. See the Task History documentation.

- Vector Search with Keyword Filtering: The vector search API now accepts an optional list of keywords as a parameter to pre-filter SQL results before performing a vector search. When vector searching via a chat completion, models automatically generate keywords relevant to the search. See the Vector Search API documentation.
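As an illustration of keyword filtering, the sketch below builds a vector search request that includes a keyword list. It assumes the runtime is listening on `localhost:8090` and exposes the search endpoint at `/v1/search`. The field names (`text`, `datasets`, `limit`, `keywords`) and the dataset name `docs` are assumptions about the request shape and should be checked against the Vector Search API documentation. The helper names are hypothetical.

```python
import json
import urllib.request

SPICE_HTTP = "http://localhost:8090"  # assumed runtime address


def build_search_payload(text, datasets, keywords=None, limit=3):
    """Assemble the JSON body; `keywords` is only sent when provided."""
    payload = {"text": text, "datasets": list(datasets), "limit": limit}
    if keywords:
        # Assumed field name for the optional keyword pre-filter.
        payload["keywords"] = list(keywords)
    return payload


def build_search_request(payload) -> urllib.request.Request:
    """Build (but do not send) the POST request for the search endpoint."""
    return urllib.request.Request(
        f"{SPICE_HTTP}/v1/search",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Example: narrow candidates to rows mentioning "refresh" before ranking.
example = build_search_payload(
    "How do accelerated datasets refresh on startup?",
    datasets=["docs"],  # hypothetical dataset name
    keywords=["refresh"],
)
```

Passing the result of `build_search_request(example)` to `urllib.request.urlopen` would send the query to a running runtime.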

- Improved Refresh Behavior on Startup: Spice no longer automatically refreshes an accelerated dataset on startup when a refresh is not needed. See the Refresh on Startup documentation.

- Graceful Shutdown for HTTP Server: The HTTP server now drains requests for graceful shutdowns, ensuring smoother runtime termination.
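For readers unfamiliar with request draining, the general idea is to stop accepting new work, give in-flight requests a bounded amount of time to finish, and only then terminate. The Python sketch below illustrates that pattern with `asyncio`. It is a generic illustration under assumed names (`DrainingServer`, `handle`, `shutdown`) and an assumed timeout, not a description of how the Spice runtime implements it.

```python
import asyncio


class DrainingServer:
    """Tracks in-flight requests so shutdown can wait for them to finish."""

    def __init__(self):
        self._accepting = True
        self._in_flight = set()

    def handle(self, coro):
        """Start handling a request; refuse new work once shutdown has begun."""
        if not self._accepting:
            coro.close()  # avoid a "coroutine was never awaited" warning
            raise RuntimeError("server is shutting down")
        task = asyncio.create_task(coro)
        self._in_flight.add(task)
        task.add_done_callback(self._in_flight.discard)
        return task

    async def shutdown(self, timeout=5.0):
        """Stop accepting, wait up to `timeout` seconds, cancel stragglers.

        Returns the number of requests that completed during the drain.
        """
        self._accepting = False
        if not self._in_flight:
            return 0
        done, pending = await asyncio.wait(set(self._in_flight), timeout=timeout)
        for task in pending:
            task.cancel()
        return len(done)


async def demo():
    """Start two short requests, then drain them before exiting."""
    server = DrainingServer()

    async def request(delay):
        await asyncio.sleep(delay)
        return "ok"

    server.handle(request(0.01))
    server.handle(request(0.02))
    return await server.shutdown(timeout=1.0)
```

Running `asyncio.run(demo())` drains both simulated requests before returning; any request still running after the timeout would be cancelled instead.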

New Contributors 🎉

- @Garamda made their first contribution in github.com/spiceai/spiceai/pull/4840

- @sergey-shandar made their first contribution in github.com/spiceai/spiceai/pull/4868

- @benrussell made their first contribution in github.com/spiceai/spiceai/pull/5126

Contributors

- @sgrebnov

- @phillipleblanc

- @peasee

- @Jeadie

- @lukekim

- @benrussell

- @Sevenannn

- @sergey-shandar

- @Garamda

- @johnnynunez

Breaking Changes

No breaking changes.

Cookbook Updates

The Spice Cookbook now has 74 recipes that make it easy to get started with Spice!

Upgrading

To upgrade to v1.1.0, use one of the following methods:

CLI:

    spice upgrade

Homebrew:

    brew upgrade spiceai/spiceai/spice

Docker:

Pull the spiceai/spiceai:1.1.0 image:

    docker pull spiceai/spiceai:1.1.0

For available tags, see DockerHub.

Helm:

    helm repo update
    helm upgrade spiceai spiceai/spiceai

What's Changed

Dependencies

- No major dependency changes.

Changelog

- release: Bump chart, and versions for next release by @peasee in <https://github.com/spiceai/spiceai/pull/4464>

- feat: Schedule testoperator by @peasee in <https://github.com/spiceai/spiceai/pull/4503>

- fix: Remove on zero results arguments from benchmarks by @peasee in <https://github.com/spiceai/spiceai/pull/4533>

- fix: Don't snapshot clickbench benchmarks by @peasee in <https://github.com/spiceai/spiceai/pull/4534>

- docs: v1.0.1 release note by @Sevenannn in <https://github.com/spiceai/spiceai/pull/4529>

- Update acknowledgements by @github-actions in <https://github.com/spiceai/spiceai/pull/4535>

- In spiced_docker, propagate setup to publish-cuda by @Jeadie in <https://github.com/spiceai/spiceai/pull/4543>

- Upgrade Rust to 1.84 by @phillipleblanc in <https://github.com/spiceai/spiceai/pull/4541>

- Upgrade dependencies by @phillipleblanc in <https://github.com/spiceai/spiceai/pull/4546>

- Revert "Use OpenAI golang client in `spice chat` (#4491)" by @Jeadie in <https://github.com/spiceai/spiceai/pull/4564>

- feat: add schema inference for the Spice.ai Data Connector by @peasee in <https://github.com/spiceai/spiceai/pull/4579>

- Remove 'tools: builtin' by @Jeadie in <https://github.com/spiceai/spiceai/pull/4607>

- feat: Add initial IMAP connector by @peasee in <https://github.com/spiceai/spiceai/pull/4587>

- feat: Add email content loading by @peasee in <https://github.com/spiceai/spiceai/pull/4616>

- feat: Add SSL and Auth parameters for IMAP by @peasee in <https://github.com/spiceai/spiceai/pull/4613>

- Change /v1/models to be OpenAI compatible by @phillipleblanc in <https://github.com/spiceai/spiceai/pull/4624>

- Use `pdf-extract` crate to extract text from PDF documents by @phillipleblanc in <https://github.com/spiceai/spiceai/pull/4615>

- Update openapi.json by @github-actions in <https://github.com/spiceai/spiceai/pull/4628>

- Add 1.0.2 release notes by @sgrebnov in <https://github.com/spiceai/spiceai/pull/4627>

- Fix cuda::ffi by @Jeadie in <https://github.com/spiceai/spiceai/pull/4649>

- Update spicepod.schema.json by @github-actions in <https://github.com/spiceai/spiceai/pull/4654>

- fix: Spice.ai schema inference by @peasee in <https://github.com/spiceai/spiceai/pull/4674>

- Add SQL Benchmark with sample eval configuration based on TPCH by @sgrebnov in <https://github.com/spiceai/spiceai/pull/4549>

- Update Helm chart to Spice v1.0.2 by @sgrebnov in <https://github.com/spiceai/spiceai/pull/4655>

- Update v1.0.2 release notes by @sgrebnov in <https://github.com/spiceai/spiceai/pull/4639>

- Fix E2E AI release install test on self-hosted runners (macos) by @sgrebnov in <https://github.com/spiceai/spiceai/pull/4675>

- Main performance metrics calculation for Text to SQL Benchmark by @sgrebnov in <https://github.com/spiceai/spiceai/pull/4681>

- Add eval datasets / test scripts for model grading criteria by @Sevenannn in <https://github.com/spiceai/spiceai/pull/4663>

- Update openapi.json by @github-actions in <https://github.com/spiceai/spiceai/pull/4684>

- Add testoperator for `evals` running by @sgrebnov in <https://github.com/spiceai/spiceai/pull/4688>

- Add GH Workflow to run Text to SQL benchmark by @sgrebnov in <https://github.com/spiceai/spiceai/pull/4689>

- Add 1.0.2 as supported version to SECURITY.md by @sgrebnov in <https://github.com/spiceai/spiceai/pull/4695>

- Text-To-SQL benchmark: trace failed tests by @sgrebnov in <https://github.com/spiceai/spiceai/pull/4705>

- Text-To-SQL benchmark: extend list of benchmarking models by @sgrebnov in <https://github.com/spiceai/spiceai/pull/4707>

- Text-To-SQL: increase sql coverage, add more advanced tests by @sgrebnov in <https://github.com/spiceai/spiceai/pull/4713>

- Use model that supports tools in hf_test by @Jeadie in <https://github.com/spiceai/spiceai/pull/4712>

- Fix Spice.ai E2E test by @phillipleblanc in <https://github.com/spiceai/spiceai/pull/4723>

- Return non-existing model for v1/chat endpoint by @Sevenannn in <https://github.com/spiceai/spiceai/pull/4718>

- Update Helm chart for 1.0.3 by @phillipleblanc in <https://github.com/spiceai/spiceai/pull/4742>

- Update dependencies by @phillipleblanc in <https://github.com/spiceai/spiceai/pull/4740>

- Update spicepod.schema.json by @github-actions in <https://github.com/spiceai/spiceai/pull/4744>

- Update SECURITY.md with 1.0.3 by @phillipleblanc in <https://github.com/spiceai/spiceai/pull/4745>

- Add basic smoke test of perplexity LLM to llm integration tests. by @Jeadie in <https://github.com/spiceai/spiceai/pull/4735>

- Don't run integration tests on PRs when only CLI is changed by @Jeadie in <https://github.com/spiceai/spiceai/pull/4751>

- Prompt user to upgrade through brew / do another clean install when spice is installed through homebrew / at non-standard path by @Sevenannn in <https://github.com/spiceai/spiceai/pull/4746>

- feat: Search with keyword filtering by @peasee in <https://github.com/spiceai/spiceai/pull/4759>

- Fix search benchmark by @sgrebnov in <https://github.com/spiceai/spiceai/pull/4765>

- feat: Add IMAP access token parameter by @peasee in <https://github.com/spiceai/spiceai/pull/4769>

- Update openapi.json by @github-actions in <https://github.com/spiceai/spiceai/pull/4774>

- Mark trunk builds as unstable by @phillipleblanc in <https://github.com/spiceai/spiceai/pull/4776>

- feat: Release Spice.ai RC by @peasee in <https://github.com/spiceai/spiceai/pull/4753>

- fix: Validate columns and keywords in search by @peasee in <https://github.com/spiceai/spiceai/pull/4775>

- Run models E2E tests on PR by @sgrebnov in <https://github.com/spiceai/spiceai/pull/4798>

- fix: models runtime not required for cloud chat by @peasee in <https://github.com/spiceai/spiceai/pull/4781>

- Only open one PR for openapi.json by @phillipleblanc in <https://github.com/spiceai/spiceai/pull/4807>

- docs: Release IMAP Alpha by @peasee in <https://github.com/spiceai/spiceai/pull/4797>

- Add Results-Cache-Status to indicate query result came from cache by @phillipleblanc in <https://github.com/spiceai/spiceai/pull/4809>

- Initial spice cli e2e tests with spice upgrade tests by @Sevenannn in <https://github.com/spiceai/spiceai/pull/4764>

- Log CLI and Runtime Versions on startup by @sgrebnov in <https://github.com/spiceai/spiceai/pull/4816>

- Sort keys for openai by @Jeadie in <https://github.com/spiceai/spiceai/pull/4766>

- Remove docs index trigger from the endgame template by @ewgenius in <https://github.com/spiceai/spiceai/pull/4832>

- Release notes for v1.0.4 by @Jeadie in <https://github.com/spiceai/spiceai/pull/4827>

- Update SECURITY.md by @Jeadie in <https://github.com/spiceai/spiceai/pull/4829>

- Update spicepod.schema.json by @github-actions in <https://github.com/spiceai/spiceai/pull/4831>

- Don't print URL by @lukekim in <https://github.com/spiceai/spiceai/pull/4838>

- add 'eval_run' to 'spice trace' by @Jeadie in <https://github.com/spiceai/spiceai/pull/4841>

- Run benchmark tests w/o uploading test results (pending improvements) by @sgrebnov in <https://github.com/spiceai/spiceai/pull/4843>

- Fix 'actual" and "output" columns in `eval.results`. by @Jeadie in <https://github.com/spiceai/spiceai/pull/4835>

- Fix string escaping of system prompt by @Jeadie in <https://github.com/spiceai/spiceai/pull/4844>

- update helm chart to v1.0.4 by @Jeadie in <https://github.com/spiceai/spiceai/pull/4828>

- Update openapi.json by @github-actions in <https://github.com/spiceai/spiceai/pull/4806>

- fix: Skip sccache in PR for external users by @peasee in <https://github.com/spiceai/spiceai/pull/4851>

- fix: Return BAD_REQUEST when not embeddings are configured by @peasee in <https://github.com/spiceai/spiceai/pull/4804>

- Debug log cuda detection failure in spice by @Sevenannn in <https://github.com/spiceai/spiceai/pull/4852>

- fix: Set RUSTC wrapper explicitly by @peasee in <https://github.com/spiceai/spiceai/pull/4854>

- Improve trace UX for `ai_completion`, fix infinite tool calls by @Jeadie in <https://github.com/spiceai/spiceai/pull/4853>

- Allow homebrew spice cli to upgrade the runtime by @Sevenannn in <https://github.com/spiceai/spiceai/pull/4811>

- Add support for MCP tools by @Jeadie in <https://github.com/spiceai/spiceai/pull/4808>

- fix: Rustc wrapper actions by @peasee in <https://github.com/spiceai/spiceai/pull/4867>

- Provide link to supported OS list when user platform is not supported by @Garamda in <https://github.com/spiceai/spiceai/pull/4840>

- Always download spice runtime version matched with spice cli version by @Sevenannn in <https://github.com/spiceai/spiceai/pull/4761>

- Disable flaky integration test by @phillipleblanc in <https://github.com/spiceai/spiceai/pull/4871>

- fix: sccache actions setup by @peasee in <https://github.com/spiceai/spiceai/pull/4873>

- Fixing Go installation in the setup script for Linux Arm64 by @sergey-shandar in <https://github.com/spiceai/spiceai/pull/4868>

- Update openapi.json by @github-actions in <https://github.com/spiceai/spiceai/pull/4864>

- DuckDB acceleration: Use temp table only for append with conflict resolution by @sgrebnov in <https://github.com/spiceai/spiceai/pull/4874>

- Trace the output of streamed `chat/completions` to runtime.task_history. by @Jeadie in <https://github.com/spiceai/spiceai/pull/4845>

- Always pass `X-API-Key` in spice api calls header if detected in env by @ewgenius in <https://github.com/spiceai/spiceai/pull/4878>

- Revert "DuckDB acceleration: Use temp table only for append with conflict resolution" by @sgrebnov in <https://github.com/spiceai/spiceai/pull/4886>

- Allow overriding spicerack base url in the CLI by @phillipleblanc in <https://github.com/spiceai/spiceai/pull/4892>

- Add test Spicepod for DuckDB full acceleration with constraints by @sgrebnov in <https://github.com/spiceai/spiceai/pull/4891>

- Refactor Parameter Handling by @Advayp in <https://github.com/spiceai/spiceai/pull/4833>

- Add test Spicepod for DuckDB append acceleration with constraints by @sgrebnov in <https://github.com/spiceai/spiceai/pull/4898>

- Update to latest async-openai fork. Update secrecy by @Sevenannn in <https://github.com/spiceai/spiceai/pull/4911>

- Fix mcp tools build by @sgrebnov in <https://github.com/spiceai/spiceai/pull/4916>

- Add more test spicepods by @Sevenannn in <https://github.com/spiceai/spiceai/pull/4923>

- task: Add more dispatch files by @peasee in <https://github.com/spiceai/spiceai/pull/4933>

- run spiceai benchmark test using test operator by @Sevenannn in <https://github.com/spiceai/spiceai/pull/4920>

- Convert sequential search code block to parallel async by @Garamda in <https://github.com/spiceai/spiceai/pull/4936>

- fix: Throughput metric calculation by @peasee in <https://github.com/spiceai/spiceai/pull/4938>

- Update dependabot dependencies & `cargo update` by @phillipleblanc in <https://github.com/spiceai/spiceai/pull/4872>

- Improve servers shutdown sequence during runtime termination by @sgrebnov in <https://github.com/spiceai/spiceai/pull/4942>

- Semantic model for views. Views visible in `table_schema` & `list_datasets` tools. by @Jeadie in <https://github.com/spiceai/spiceai/pull/4946>

- update openai-async by @Jeadie in <https://github.com/spiceai/spiceai/pull/4948>

- Update openapi.json by @github-actions in <https://github.com/spiceai/spiceai/pull/4961>

- fix: Redundant results snapshotting by @peasee in <https://github.com/spiceai/spiceai/pull/4956>

- Create schema for views if not exist by @Jeadie in <https://github.com/spiceai/spiceai/pull/4957>

- Bump Jimver/cuda-toolkit from 0.2.21 to 0.2.22 by @dependabot in <https://github.com/spiceai/spiceai/pull/4969>

- List available operations in `spice trace <operation>` by @Jeadie in <https://github.com/spiceai/spiceai/pull/4953>

- Initial commit of release analytics by @lukekim in <https://github.com/spiceai/spiceai/pull/4975>

- Remove spaces from CSV by @lukekim in <https://github.com/spiceai/spiceai/pull/4977>

- Fix Spice pods watcher by @sgrebnov in <https://github.com/spiceai/spiceai/pull/4984>

- feat: Add appendable data sources for the testoperator by @peasee in <https://github.com/spiceai/spiceai/pull/4949>

- Omit timestamp when warning regarding datasets with hyphens by @Advayp in <https://github.com/spiceai/spiceai/pull/4987>

- Update helm chart to v1.0.5 by @sgrebnov in <https://github.com/spiceai/spiceai/pull/4990>

- docs: Update qa_analytics.csv by @peasee in <https://github.com/spiceai/spiceai/pull/4989>

- Update end_game template by @sgrebnov in <https://github.com/spiceai/spiceai/pull/4991>

- Update spicepod.schema.json by @github-actions in <https://github.com/spiceai/spiceai/pull/4993>

- Add v1.0.5 release notes by @sgrebnov in <https://github.com/spiceai/spiceai/pull/4994>

- Supported Versions: include v1.0.5 by @sgrebnov in <https://github.com/spiceai/spiceai/pull/4995>

- Dependabot updates by @phillipleblanc in <https://github.com/spiceai/spiceai/pull/4992>

- Switch to basic markdown formatting for vector search by @sgrebnov in <https://github.com/spiceai/spiceai/pull/4934>

- docs: Update qa_analytics.csv by @peasee in <https://github.com/spiceai/spiceai/pull/5001>

- feat: Add TPCDS FileAppendableSource for testoperator by @peasee in <https://github.com/spiceai/spiceai/pull/5002>

- Update `ring` by @phillipleblanc in <https://github.com/spiceai/spiceai/pull/5003>

- docs: Update qa_analytics.csv by @peasee in <https://github.com/spiceai/spiceai/pull/5006>

- feat: Add ClickBench FileAppendableSource for testoperator by @peasee in <https://github.com/spiceai/spiceai/pull/5004>

- feat: Validate append test table counts by @peasee in <https://github.com/spiceai/spiceai/pull/5008>

- feat: Add append spicepods by @peasee in <https://github.com/spiceai/spiceai/pull/5009>

- Improve Vector Search performance for large content w/o primary key defined by @sgrebnov in <https://github.com/spiceai/spiceai/pull/5010>

- Don't try to downgrade Arc in test_acceleration_duckdb_single_instance by @phillipleblanc in <https://github.com/spiceai/spiceai/pull/5014>

- feat: Add an initial testoperator vector search command by @peasee in <https://github.com/spiceai/spiceai/pull/5011>

- feat: Update testoperator workflows for automatic snapshot updates by @peasee in <https://github.com/spiceai/spiceai/pull/5018>

- Fix Vector Search when additional columns include embedding column by @sgrebnov in <https://github.com/spiceai/spiceai/pull/5022>

- Include test for primary key passed as additional column in Vector Search by @sgrebnov in <https://github.com/spiceai/spiceai/pull/5024>

- fix: Update benchmark snapshots by @github-actions in <https://github.com/spiceai/spiceai/pull/5020>

- upgrade mistral.rs by @Jeadie in <https://github.com/spiceai/spiceai/pull/4952>

- fix: Indexes for TPCDS SQLite Spicepod by @peasee in <https://github.com/spiceai/spiceai/pull/5038>

- fix: Update benchmark snapshots by @github-actions in <https://github.com/spiceai/spiceai/pull/5035>

- Include local files in generated Spicepod package by @sgrebnov in <https://github.com/spiceai/spiceai/pull/5041>

- update mistral.rs to 'spiceai' branch rev by @Jeadie in <https://github.com/spiceai/spiceai/pull/5029>

- Configure spiced as an MCP SSE server by @Jeadie in <https://github.com/spiceai/spiceai/pull/5039>

- Update openapi.json by @github-actions in <https://github.com/spiceai/spiceai/pull/5052>

- fix: Disable benchmarks schedule, enable testoperator schedule by @peasee in <https://github.com/spiceai/spiceai/pull/5058>

- fix: Update benchmark snapshots by @github-actions in <https://github.com/spiceai/spiceai/pull/5060>

- Update ROADMAP.md March 2025 by @lukekim in <https://github.com/spiceai/spiceai/pull/5061>

- fix: Testoperator data setup by @peasee in <https://github.com/spiceai/spiceai/pull/5068>

- fix: All HTTP endpoints to hang when adding an invalid dataset with --pods-watcher-enabled by @sgrebnov in <https://github.com/spiceai/spiceai/pull/5050>

- fix: Update benchmark snapshots by @github-actions in <https://github.com/spiceai/spiceai/pull/5073>

- Integration tests for MCP tooling by @Jeadie in <https://github.com/spiceai/spiceai/pull/5053>

- OpenAPI docs for MCP by @Jeadie in <https://github.com/spiceai/spiceai/pull/5057>

- fix: Acceleration federation test by @peasee in <https://github.com/spiceai/spiceai/pull/5090>

- fix: Allow spiced commit in testoperator dispatch by @peasee in <https://github.com/spiceai/spiceai/pull/5098>

- fix: Use RefreshOverrides for the refresh API definition by @peasee in <https://github.com/spiceai/spiceai/pull/5095>

- Update openapi.json by @github-actions in <https://github.com/spiceai/spiceai/pull/5094>

- fix: Increase tries for refresh_status_change_to_ready test by @peasee in <https://github.com/spiceai/spiceai/pull/5099>

- feat: Testoperator reports on max and median memory usage by @peasee in <https://github.com/spiceai/spiceai/pull/5101>

- Update openapi.json by @github-actions in <https://github.com/spiceai/spiceai/pull/5105>

- fix: Fail testoperator on failed queries by @peasee in <https://github.com/spiceai/spiceai/pull/5106>

- Update Helm chart to 1.0.6 by @phillipleblanc in <https://github.com/spiceai/spiceai/pull/5107>

- Update SECURITY.md to include 1.0.6 by @phillipleblanc in <https://github.com/spiceai/spiceai/pull/5109>

- Update spicepod.schema.json by @github-actions in <https://github.com/spiceai/spiceai/pull/5108>

- Add QA analytics for 1.0.6 by @phillipleblanc in <https://github.com/spiceai/spiceai/pull/5110>

- add env variables to tools, usable in MCP stdio by @Jeadie in <https://github.com/spiceai/spiceai/pull/5097>

- HF downloads obey SIGTERM by @Jeadie in <https://github.com/spiceai/spiceai/pull/5044>

- Add v1.0.6 release notes into trunk by @sgrebnov in <https://github.com/spiceai/spiceai/pull/5111>

- Remove redundant mod name for iceberg integration tests by @Sevenannn in <https://github.com/spiceai/spiceai/pull/5112>

- Use fixed data directory for test operator by @Sevenannn in <https://github.com/spiceai/spiceai/pull/5103>

- Improvements for evals by @Jeadie in <https://github.com/spiceai/spiceai/pull/5040>

- Make McpProxy trait for MCP passthrough by @Jeadie in <https://github.com/spiceai/spiceai/pull/5115>

- Properly handle '/' for tool names. by @Jeadie in <https://github.com/spiceai/spiceai/pull/5116>

- Use retry logic when loading tools by @Jeadie in <https://github.com/spiceai/spiceai/pull/5120>

- Exclude slow tests from regular pr runs by @Sevenannn in <https://github.com/spiceai/spiceai/pull/5119>

- Fix test operator snapshot update by @Sevenannn in <https://github.com/spiceai/spiceai/pull/5130>

- spice init: Fixes windows bug where full path is used for spicepod name by @benrussell in <https://github.com/spiceai/spiceai/pull/5126>

- fix: Update benchmark snapshots by @github-actions in <https://github.com/spiceai/spiceai/pull/5131>

- Implement graceful shutdown for HTTP server by @sgrebnov in <https://github.com/spiceai/spiceai/pull/5102>

- Update enhancement.md by @lukekim in <https://github.com/spiceai/spiceai/pull/5142>

- Add GitHub Workflow and PoC Spicepod configuration to run FinanceBench tests by @sgrebnov in <https://github.com/spiceai/spiceai/pull/5145>

- Fix Postgres and MySQL installation on macos14-runner (E2E CI) by @sgrebnov in <https://github.com/spiceai/spiceai/pull/5155>

- De-duplicate attachments in DuckDBAttachments by @phillipleblanc in <https://github.com/spiceai/spiceai/pull/5156>

- v1.0.7 release note by @Sevenannn in <https://github.com/spiceai/spiceai/pull/5153>

- Update spicepod.schema.json by @github-actions in <https://github.com/spiceai/spiceai/pull/5160>

- Update Helm chart to 1.0.7 by @Sevenannn in <https://github.com/spiceai/spiceai/pull/5159>

- Add github token to macos test release download tasks by @Sevenannn in <https://github.com/spiceai/spiceai/pull/5161>

- update security.md for 1.0.7 by @Sevenannn in <https://github.com/spiceai/spiceai/pull/5162>

- Update roadmap.md by @Sevenannn in <https://github.com/spiceai/spiceai/pull/5163>

- Add a performance comparison section for 1.0.7 by @phillipleblanc in <https://github.com/spiceai/spiceai/pull/5164>

- docs: Add snafu error variant point to style guide by @peasee in <https://github.com/spiceai/spiceai/pull/5167>

- Fix 1.0.7 release note by @Sevenannn in <https://github.com/spiceai/spiceai/pull/5168>

- Adjust DuckDB connection pool size based on DuckDB accelerator instances usage by @Sevenannn in <https://github.com/spiceai/spiceai/pull/5117>

- Add automatic retry for NSQL queries by @sgrebnov in <https://github.com/spiceai/spiceai/pull/5169>

- Include chat completion id to task history by @sgrebnov in <https://github.com/spiceai/spiceai/pull/5170>

- Trace when all runtime components are ready by @sgrebnov in <https://github.com/spiceai/spiceai/pull/5171>

- Update qa_analytics.csv for 1.0.7 by @Sevenannn in <https://github.com/spiceai/spiceai/pull/5165>

- Set default tool recursion limit to 10 to prevent infinite loops by @sgrebnov in <https://github.com/spiceai/spiceai/pull/5173>

- Add support for `schema_source_path` param for object-store data connectors by @sgrebnov in <https://github.com/spiceai/spiceai/pull/5178>

- Run license check and check changes on self-hosted macOS runners by @lukekim in <https://github.com/spiceai/spiceai/pull/5179>

- Add MCP by @lukekim in <https://github.com/spiceai/spiceai/pull/5183>

Full Changelog: github.com/spiceai/spiceai/compare/v1.0.0...release/1.1